
Google explains how pages can rank even when they are blocked by robots.txt

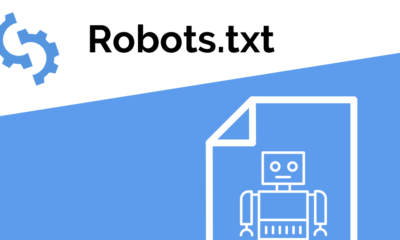

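Robots.txt is one of the most important files for a webmaster. It tells Googlebot and other crawlers which parts of a website they may crawl. However, robots.txt controls crawling, not indexing. Disallowing a URL stops Google from fetching the page, but it does not reliably keep that URL out of search results. To keep a page out of the index, use a noindex directive, either a `<meta name="robots" content="noindex">` tag in the page or an `X-Robots-Tag: noindex` HTTP header, on a page that Googlebot is allowed to crawl. Google no longer supports `noindex` rules placed inside robots.txt itself.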
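To see what a robots.txt rule actually controls, here is a minimal sketch using Python's standard-library `urllib.robotparser`. The robots.txt rules, the `example.com` URLs and the `crawl_allowed` helper are hypothetical, made up for illustration. Python's parser is not Google's: it applies the first matching rule in file order rather than Google's most-specific-rule precedence, so results can differ when `Allow` and `Disallow` rules overlap. A `False` result only means the crawler should not fetch the page. It says nothing about whether the URL can appear in search results.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for example.com (not taken from the article).
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /tmp/
"""


def crawl_allowed(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the rules above permit user_agent to fetch url.

    This answers a crawling question only; a disallowed URL can still
    be indexed if Google discovers it through links.
    """
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())  # parse rules from a string, no network access
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/private/report.html",
                "https://example.com/blog/post.html"):
        print(url, crawl_allowed(url))
```

Because Googlebot has its own group in this file, the `*` group's `/tmp/` rule does not apply to it; a crawler without its own group, such as Bingbot, falls back to the `*` rules.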

As a result, a URL can still end up in Google's index even though it is disallowed in robots.txt. Until now it was not clear how this happens, but Google's John Mueller has now explained how such blocked pages end up being indexed.

In a hangout session, a webmaster asked Mueller how Google judges the relevance of a page that it indexes even though robots.txt blocks it from being crawled. Mueller replied that Google obviously cannot look at the content of such a page, because it is not allowed to fetch it. Instead, Google relies on other signals to compare that URL with other URLs.

He added that Google still gives priority to the pages on a site that are not blocked. However, Google may index blocked pages that it considers worth showing. One signal it uses is the links pointing to them: if many links point to a page, Google may index that page even though it is blocked.

Here is John Mueller’s answer on this topic:

“So, from that point of view, it’s not that trivial. We do sometimes show robotted pages in the search results just because we’ve seen that they work really well. When people link to them, for example, we can estimate that this is probably something worthwhile, all of these things.

So it’s something where, as a site owner, I wouldn’t recommend using robots.txt to block your content and hope that it works out well. But if your content does happen to be blocked by robots.txt we will still try to show it somehow in the search results.”
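Per Google's documentation, the usual alternative to blocking a page in robots.txt is to let Googlebot crawl it and mark it `noindex`. The sketch below, a simplification under stated assumptions, checks a page's HTML for a robots `noindex` (or `none`) directive and shows why that directive only helps when crawling is allowed. The function names and outcome labels are hypothetical; they are not a Google API and do not model Google's actual ranking logic.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        values = {name: (value or "") for name, value in attrs}
        if values.get("name", "").lower() in ("robots", "googlebot"):
            for token in values.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """True if the meta tags or the X-Robots-Tag header ask for noindex.

    Simplification: only plain comma-separated header values are handled;
    user-agent-prefixed forms such as "googlebot: noindex" are not.
    """
    parser = RobotsMetaParser()
    parser.feed(html)
    header_tokens = {t.strip().lower() for t in x_robots_tag.split(",")}
    tokens = parser.directives | header_tokens
    return "noindex" in tokens or "none" in tokens  # "none" means noindex, nofollow


def likely_outcome(crawl_allowed: bool, html: str, x_robots_tag: str = "") -> str:
    """Hypothetical, simplified classification of what happens to a URL."""
    if not crawl_allowed:
        # Googlebot never fetches the page, so any noindex on it is never seen.
        return "blocked: noindex unseen, URL may still be indexed from links"
    if has_noindex(html, x_robots_tag):
        return "crawlable with noindex: expected to be dropped from results"
    return "crawlable and indexable"
```

For example, `likely_outcome(False, page_html)` reports a blocked URL regardless of what `page_html` contains, which mirrors Mueller's point: a page hidden behind robots.txt can still be shown based on links, because Google never gets to read its noindex.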
