News

Google answers a question about partial and total site deindexing

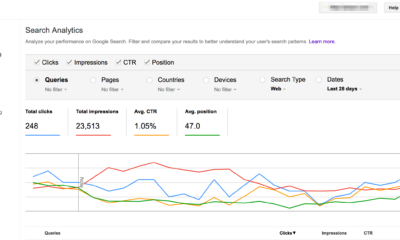

Google’s John Mueller recently answered a question from someone whose site had been deindexed, which cost the site its rankings.

Mueller offered a list of technical issues that can cause Google to remove a website from its search results. The useful part of this question and answer is that Mueller discusses two kinds of deindexing: a fast one and a slow one.

An SEO office-hours hangout is not the place to ask for a diagnosis of a specific website. It is therefore reasonable that Mueller did not give the person an answer specific to their site.

The person asked: “I own a site, and it was ranking good before the 23rd of March. I upgraded from Yoast SEO… free to premium. After that, the site went deindexed from Google, and we lost all our keywords.”

The person noted that for the past few days the keywords had kept returning to the search results for a few hours and then disappearing again. They said they had checked the robots.txt file and the sitemaps, and had verified that there were no manual penalties.

However, they did not mention checking whether the web pages contain a robots noindex meta tag.
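A robots.txt check like the one the person describes can be sketched with Python's standard library. This is only an illustration: the helper name `googlebot_allowed`, the sample rules, and the example.com URL are assumptions, and Python's parser does not match rules exactly the way Google's crawler does.

```python
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """Return True if the given robots.txt text lets Googlebot fetch the URL."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


# Hypothetical robots.txt contents, for illustration only.
blocking_rules = "User-agent: *\nDisallow: /\n"  # blocks the whole site
open_rules = "User-agent: *\nDisallow:\n"        # empty Disallow allows everything

print(googlebot_allowed(blocking_rules, "https://example.com/page"))
print(googlebot_allowed(open_rules, "https://example.com/page"))
```

A robots.txt rule that blocks crawling is not the same thing as a noindex directive, so a clean result here does not rule out other causes.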

Mueller began his answer by speculating that the deindexing was probably not connected to upgrading the Yoast plugin from the free to the premium version. Even so, he said it is reasonable to start with the Yoast plugin and review its settings.

The person, after all, had no idea what the cause was. According to Mueller, it is good practice not to dismiss anything without checking it first. He therefore advises against ruling out the Yoast SEO plugin upgrade as a cause before it has been checked.

Mueller also offered insight into how deindexing works, describing a slow deindexing scenario in which parts of a site are gradually dropped from the index because Google no longer considers them relevant.

The slow deindexing Mueller describes affects parts of a site, not the entire site, so it is partial deindexing. The person’s problem, however, was total deindexing of the site.

If a site is deindexed, it is worth checking not only the robots.txt file but also the source code of individual pages. A site can be deindexed for many reasons beyond robots.txt and the robots meta tag, so hacking and other technical issues also need to be investigated.
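Checking a page's source for a noindex directive can be sketched as follows. This is a minimal illustration, not a full audit tool: the names `RobotsMetaParser` and `has_noindex` are hypothetical, the HTML is passed in as a string rather than fetched, and the `X-Robots-Tag` handling is simplified (it ignores user-agent prefixes such as `googlebot:`).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so normalise them to "".
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """Return True if the page HTML or the X-Robots-Tag header value asks not to be indexed."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header = [d.strip().lower() for d in x_robots_tag.split(",")]
    found = set(parser.directives) | set(header)
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in found or "none" in found


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))
```

In practice the HTML and the response headers would come from fetching each affected URL. An SEO plugin setting that discourages indexing would typically show up as exactly this kind of meta tag.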
